Design Thinking

Tuesday, November 17, 2009 at 6:41AM

Dev Patnaik's recent post at FastCompany.com about reinventing the MBA caught my eye this morning. He interviews Roger Martin, of the Rotman School of Management, and the two discuss bringing Design Thinking into the mix of what a business degree should include. The discussion is an excellent one, and if you don't have time to watch the video, at least read Dev's summary on the Fast Company site.

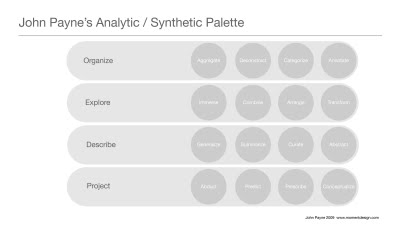

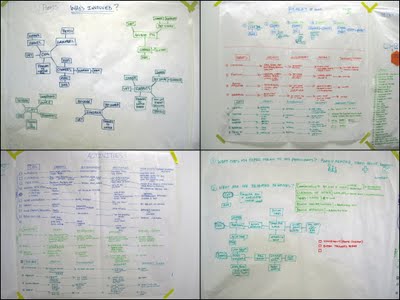

This idea, Design Thinking (which I define as the sort of creative problem solving, lateral thinking, and so forth taught in many, but most definitely not all, design schools), looks like the new darling of the business press, and I welcome that. The more we can integrate this sort of thinking into all of our problem-solving processes, the better off we will be. But when I reflect on what's missing in today's business management, I see another, perhaps more important, omission.

I think we're long overdue for a renaissance of Peter Drucker's ideas. On my drive home yesterday, I caught the public radio program Marketplace and heard Kai Ryssdal's interview with Harvard Business School's Rosabeth Moss Kanter, who has written an article in this month's Harvard Business Review about the continuing relevance of Drucker's ideas.

This week we are celebrating (along with the 103rd birthday of Eva Zeisel, of course) the 100th anniversary of Drucker's birth. Most readers will know of Drucker, who is often called the father of business management. I found this short interview an excellent review of Drucker's ideas, some of which we sorely need today:

As Kanter says, "First was the importance of a company having a sense of mission or a purpose, and that's not identical with its strategy, it's not identical with its business model, it's why it exists and what social good or greater good that it's serving." Most importantly, Drucker did not hold that management should concern itself solely with serving shareholders' needs: "He talked about all the responsibilities of management, so shareholders were certainly one for businesses but also employees, customers, suppliers, and society in general."

Ryssdal asked what Drucker would say about "the context that a lot of businesses find themselves in today of really having to cut their costs and get their share price up, maximize their profitability."

Kanter: "Peter was a very big believer in management by objectives. ...you know what your goals are and then you organize to get those goals met, which means that you do have to operate efficiently. But it also means that you don't sacrifice the long term for the short term. So ever since he started writing about high CEO compensation in the 1980s, he said that companies were often not fair. They often did have resources, but they were concentrated at the top. And that letting the shareholders, but also executives, walk away with the lion's share of the profits rather than reinvesting them, that would not create a productive future for business."

So my question is, who is enacting Drucker's ideas today?

Katherine Bennett | tagged Peter Drucker, business management, design, design education, zeisel